Study finds significant under-reporting of safety data on US nursing home comparison website – will the Australian Government move away from asking providers to self-report data?

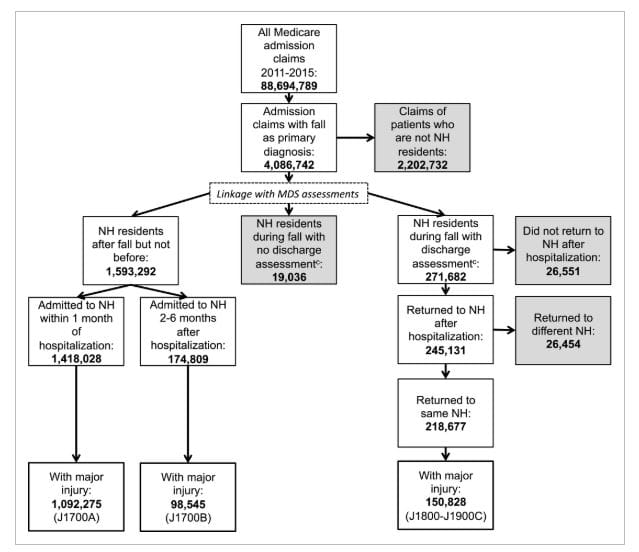

The data used by US Government website Nursing Home Compare to report on residents’ safety related to falls may be “highly inaccurate”, according to research by the University of Chicago. The site – which families use to research aged care options – critically formed the basis of the Royal Commission’s first research paper on staffing levels in Australia, suggesting that the data behind that paper may also be open to scrutiny.

Prachi Sanghavi, an assistant professor in public health sciences, uncovered major discrepancies between the falls figures used for the website’s ratings and the actual Medicare claims for falls by residents between 2011 and 2015 (pictured above). Only 57.5% of falls were accounted for in the Minimum Data Set (MDS) that Nursing Home Compare relies on, which is self-reported by nursing homes. Reporting rates were higher for white residents (59%) than non-white residents (46%), and for long-term stays (62.9%) than short-term stays (47.1%).

In short, the actual rate of falls is much higher than the ratings on the website – which is run by the Centers for Medicare & Medicaid Services – would suggest to consumers and their families.

“This is a substantial amount of underreporting and is deeply concerning because without good measurement, we cannot identify nursing homes that may be less safe and in need of improvement,” Ms Sanghavi said.

The researcher, who identified 150,828 major injury falls in Medicare claims from a data set of nearly 88.7 million claims, added that the calculations were as conservative as possible, so there would be little argument about whether a fall should have been reported – meaning the true numbers could be even higher.

It’s not the first time that Nursing Home Compare has come under fire for using self-reported data.

Five star homes failing to meet standards

In 2014, a New York Times investigation found serious deficiencies in American nursing homes rated five stars on the website.

“I found it odd that Nursing Home Compare would use self-reported data,” Ms Sanghavi added. “Having worked with Medicare claims data, I thought I could use it to study MDS reporting. The Medicare claims we used are hospital bills. They want to get paid and should not have an interest in nursing home public reporting. That's why they are a more objective source than the self-reported data from nursing homes.”

Based on the results, the researcher wants the Centers for Medicare & Medicaid Services to base its evaluation of falls on an objective source, such as claims data.

My point? I have two to make. The first is that Australia has had its own mandatory quality indicators program in place since 1 July 2019 – which also relies on self-reported data. Providers must collect and provide information on three clinical quality indicators – pressure injuries, use of physical restraints and unplanned weight loss (falls are due to be added soon, along with the use of chemical restraints). The first round of data, collected between July and September 2019, suggests the incidence of these indicators is low.

Check out the graph above on pressure injuries – while 5,801 Stage 1 pressure injuries were observed, this worked out as only 0.36 observations per 1,000 days that a Government subsidy was received for a resident. For Stage 4 injuries, the 282 observations equate to just 0.02 observations per 1,000 days. The same low occurrence was noted for physical restraints and unplanned weight loss – for example, the 63,217 observations of physical restraints represent 3.95 per 1,000 days.

But the US research raises the question of similar inaccuracies here – how long until an Australian academic compares hospital admissions for residents against the self-reported figures and concludes that self-reporting is not a reliable source of data? With the headlines from the Royal Commission and Earle Haven last year, the Government is already on tenterhooks about safety in aged care – and could look at independently sourcing the data through increased assessment contacts.
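For readers who want to check the arithmetic, here is a minimal Python sketch of how a "per 1,000 days" rate of this kind is worked out, and what denominator the published figures imply. The counts and rates are the ones quoted above; the function names are my own, the implied number of subsidised care days is backed out from those rounded figures rather than taken from the Government's data, and the capture rate in the last function is purely hypothetical.

```python
def rate_per_1000_days(observations: int, care_days: float) -> float:
    """Observations per 1,000 subsidised care days."""
    return observations / care_days * 1000


def implied_care_days(observations: int, rate_per_1000: float) -> float:
    """Back out the denominator implied by a published count and rate."""
    return observations / rate_per_1000 * 1000


def adjusted_rate(rate_per_1000: float, capture_fraction: float) -> float:
    """What the rate would be if only a fraction of events were reported.

    capture_fraction is a hypothetical input, not a measured Australian figure.
    """
    return rate_per_1000 / capture_fraction


# Counts and rates as quoted in the article (July to September 2019).
published = {
    "Stage 1 pressure injuries": (5801, 0.36),
    "Stage 4 pressure injuries": (282, 0.02),
    "Physical restraints": (63217, 3.95),
}

for name, (count, rate) in published.items():
    days = implied_care_days(count, rate)
    print(f"{name}: roughly {days / 1e6:.1f} million care days implied")
```

The three implied denominators will not match exactly because the published rates are rounded to two decimal places, which matters most for the very small Stage 4 figure. Calling `adjusted_rate(0.36, 0.575)` shows what the Stage 1 rate would look like if reporting here were as incomplete as the US falls data; that is an illustration only, since nobody has yet measured under-reporting in the Australian program.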

Royal Commission data in doubt

Secondly, and perhaps more pertinently, the findings throw light on the accuracy of the data on the US site – which formed the basis of the Royal Commission’s first research paper, ‘How Australian Residential Aged Care Staffing Levels Compare with International and National Benchmarks’, by the Australian Health Services Research Institute (AHSRI) at the University of Wollongong. That research put forward that over 50% of Australia’s 180,000 aged care residents are in homes with staffing levels that would be rated 1 to 2 stars (unacceptable levels of staffing) under the United States system. The US study related to falls, not staffing – but it makes you wonder: how accurate is the US star rating system?

The Commission has been leaning heavily towards a star rating system in its thinking – with the US system held up as the preferred model for Australia. Its first consultation paper on the future design of the aged care system is pushing for an entry point to the system that would offer “meaningful information about quality and cost, and a search function that helps people compare and select providers” – which could easily be provided to consumers by a star rating system. It all points to a need for more accurate data both here and in the States – and with it, a higher workload and more red tape for providers.